- Home

- About Us

- Work

- Journal

- Contact

- Axie infinity logo

- Final draft 11 crack clean windows

- Adobe character animator demo

- Battlefield 5 price

- Apple store columbia sc

- Hero of the kingdom 3 best way to make money

- Fujifilm xpro

- Swifty and takarita

- Darkest dungeon best team

- Avidemux video editing

- Synfig studio audio merging

- Easeus data recovery wizard professional 9-0

- Noteledge cloud

- Ntfs 3g android

- Government grant monies

- Need for speed shift soundtracks

- Rootsmagic sync with ancestry

- Civilization revolution 2

- Passpartout the starving artist crack mac

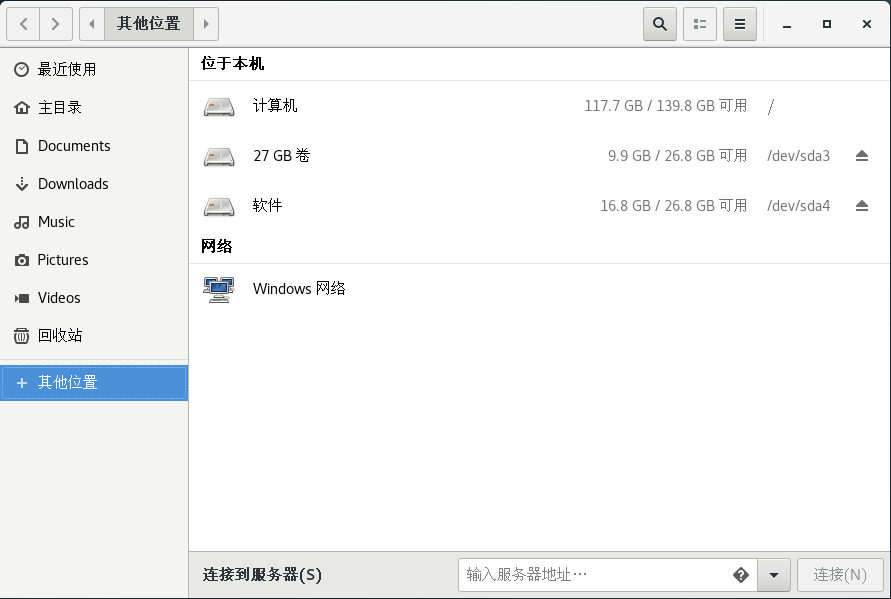


Clearly, this will end up as delivering “exFAT support” in the Linux kernel. This all makes the future of Microsoft’s exFAT initiative quite vague. This also raises the question to a commercial customer on why they would pay to help others, who may well be competitors. If a commercial player commits anything to Linux publicly as an outcome of work for hire for a specific customer, there is no way to make money out of it in the future since it becomes available to anyone on a royalty-free basis. They’re limited to non-redistributable commits only, which is a pretty low priority case in the real world.

NTFS 3G ANDROID CODE

One of the major requirements of the GPL license is to disclose changes to source code in case of further distribution, making it pointless for commercial players to participate. This limits the contribution to such projects almost solely to the open source community itself. It’s obvious that building such an environment can take years, if not decades, and the main thing here is not to know how something should work according to specs, but to know how and where exactly it fails. In other words, the main problem is not the resources needed to develop the code, the main problem is time needed to build up a reliable test-coverage that will provide a sufficient barrier for data-loss bugs.Īnother problem with open source is that it is usually accompanied by a GPL license. This assures a careful track record and in-depth analysis for every failure, as well as effective work-flow, making sure any given bug or failure never repeats. The core to success in developing a highly reliable solution is a carefully nurtured auto-test environment.

NTFS 3G ANDROID SOFTWARE

The open source community is successful, though it has been in create open source programs and platforms, is still no guarantee of industrial-grade software development (3).

The root of the problem is in the variety of real-life situations where bugs and failures may occur and lead to a data-loss situations, which is a total no-go in the real world. The main reason is that in all of these cases, data structure specs and the description of algorithms are not the most important piece of the picture. So, why didn’t the open source model work in these three cases? There are several commercial implementations of SMB as soon as a commercial level of support is required.

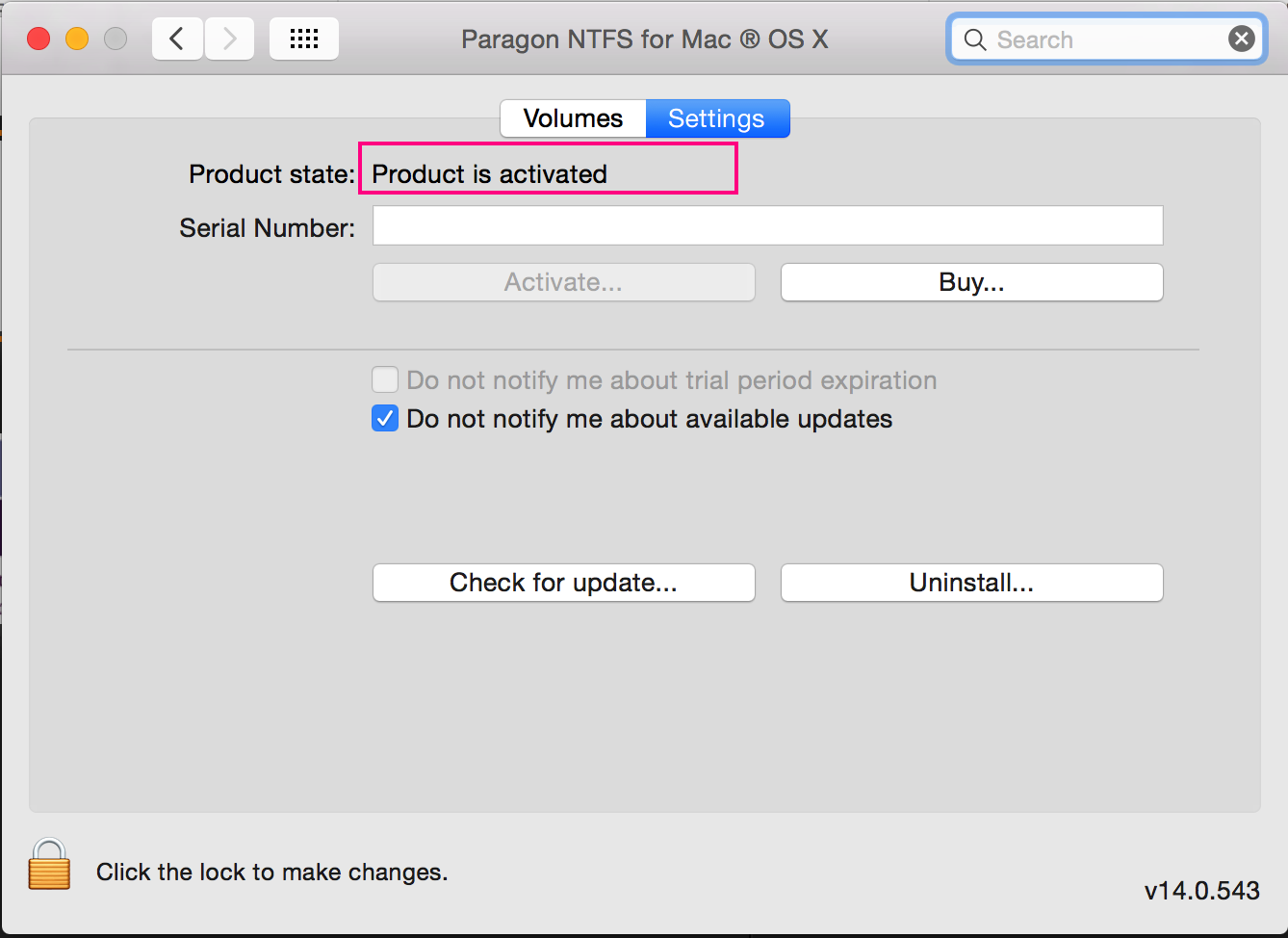

NTFS 3G ANDROID MAC

Mac OS, as well as the majority of printer manufacturers, do not rely on an open-source solution. One can activate write support, but there’s no guarantee that NTFS volumes won’t be corrupted during write operations.Īn additional example, away from filesystems, is an open source SMB protocol implementation. That appears strange given the existence of NTFS-3G for Linux.

The other case is Mac OS, which is another Unix derivative that still does not have commercial support for NTFS-write only supports NTFS in a read-only mode.

NTFS 3G ANDROID ANDROID

This shows the inability (or unwillingness based on the realistic estimation of a needed effort) of software giant Google to make its own implementation of a much simpler FAT in the Android Kernel.

The most sound case is Android, which creates a native Linux ext4FS container to run apps from FAT formatted flash cards (3). Let’s first look into some cases where filesystems similar to exFAT were supported in Unix derivatives and how that worked from an open source perspective. They’ve made exFAT specs available to the general public (though still hiding transactional exFAT specs (2) away from public eyes) and they’ve promised (1) an exFAT patent fee exemption for OIN members. Whatever Microsoft’s reasons for doing so, the consequence of this exFAT change is not at all evident at this stage. This is an interesting example of a change to the exFAT ecosystem that has been mostly proprietary for almost two decades. Last year, Microsoft announced support for the inclusion of the exFAT technology into the Linux Kernel (1).
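The argument about auto-test environments as a barrier against data-loss bugs can be made a little more concrete. Below is a minimal sketch of one such check, written in Python as an assumption (the article names no language or tool). It writes pseudo-random files to a directory on the filesystem under test and forces them to disk with `os.fsync`. It then reads the files back and compares SHA-256 digests. The function `write_and_verify`, its parameters, and the idea of pointing it at a mounted NTFS or exFAT volume are all hypothetical. This is not any project’s actual test suite.

```python
import hashlib
import os
import random
import tempfile


def write_and_verify(mount_dir: str, num_files: int = 8,
                     size: int = 64 * 1024, seed: int = 0) -> list[str]:
    # Hypothetical helper: mount_dir is assumed to be a directory on the
    # filesystem under test (e.g. a mounted NTFS or exFAT volume).
    rng = random.Random(seed)  # seeded so any failing run can be replayed exactly
    expected: dict[str, str] = {}

    # Write phase: create files with pseudo-random contents and force them to disk.
    for i in range(num_files):
        name = f"blob_{i:04d}.bin"
        data = rng.randbytes(size)
        with open(os.path.join(mount_dir, name), "wb") as f:
            f.write(data)
            f.flush()
            os.fsync(f.fileno())
        expected[name] = hashlib.sha256(data).hexdigest()

    # Verify phase: read every file back and compare digests.
    # Without an unmount/remount in between, reads may be served from the page cache.
    mismatches = []
    for name, digest in expected.items():
        with open(os.path.join(mount_dir, name), "rb") as f:
            if hashlib.sha256(f.read()).hexdigest() != digest:
                mismatches.append(name)
    return mismatches


if __name__ == "__main__":
    # Demo run against a temporary directory on whatever filesystem hosts it.
    with tempfile.TemporaryDirectory() as d:
        print("mismatched files:", write_and_verify(d, seed=42))
```

An empty list means every file read back intact. The check above reads back immediately after writing, so it mostly exercises the cache. A more realistic harness would unmount and remount the volume (or power-cycle a test device) between the two phases. Seeding the generator lets a failing case be replayed deterministically. That is one simple way to keep a fixed bug from quietly returning.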